Founder's Letter

It all started in January of 2018. My team and I had just completed the build-out of our third datacenter in 12 months. We had just begun moving production workloads when a VMware NSX bug brought everything crashing down. For the next 22 hours we worked to restore operations.

That was only the beginning of a very painful year. Over the next several months, we suffered additional datacenter outages due to the likes of a NetApp QoS bug, a Cisco ASA bug, and others.

I spoke with industry peers and heard similar horror stories. It was comforting to hear that I was not alone, but... was this just how it had to be? All I could think was:

"Are all IT Operations professionals doomed to suffer like this during their careers?"

Over the next few months, I spent a considerable amount of time searching for solutions. I asked several industry colleagues what they were doing to mitigate this risk and found no answers. By August, I realized there was no product available that would help prevent 2 a.m. datacenter outages.

So, I decided to build one. My team and I cobbled together a semi-automated process that was better than the current, manual industry-standard processes.

After the initial outages, Cisco offered to set us up with a Technical Account Manager who would pick up the phone and call us whenever a new bug was published. But how was this any better than getting more emails in our inboxes?

My team was among the best and brightest, but like many IT teams, we were understaffed relative to the expectations of the business. This issue was too complex for humans to solve without help. We needed something more than spreadsheets and an email inbox to manage the thousands of different risks.

"There had to be a better way."

Finally, in late 2018 at the Gartner Infrastructure and Operations conference, I had a light-bulb moment. Everyone I spoke to had similar stories to mine. When I described what we had recently built, nearly everyone remarked how much better our solution was compared to the status quo.

"I came to the realization that I could help others avoid the pain I had experienced."

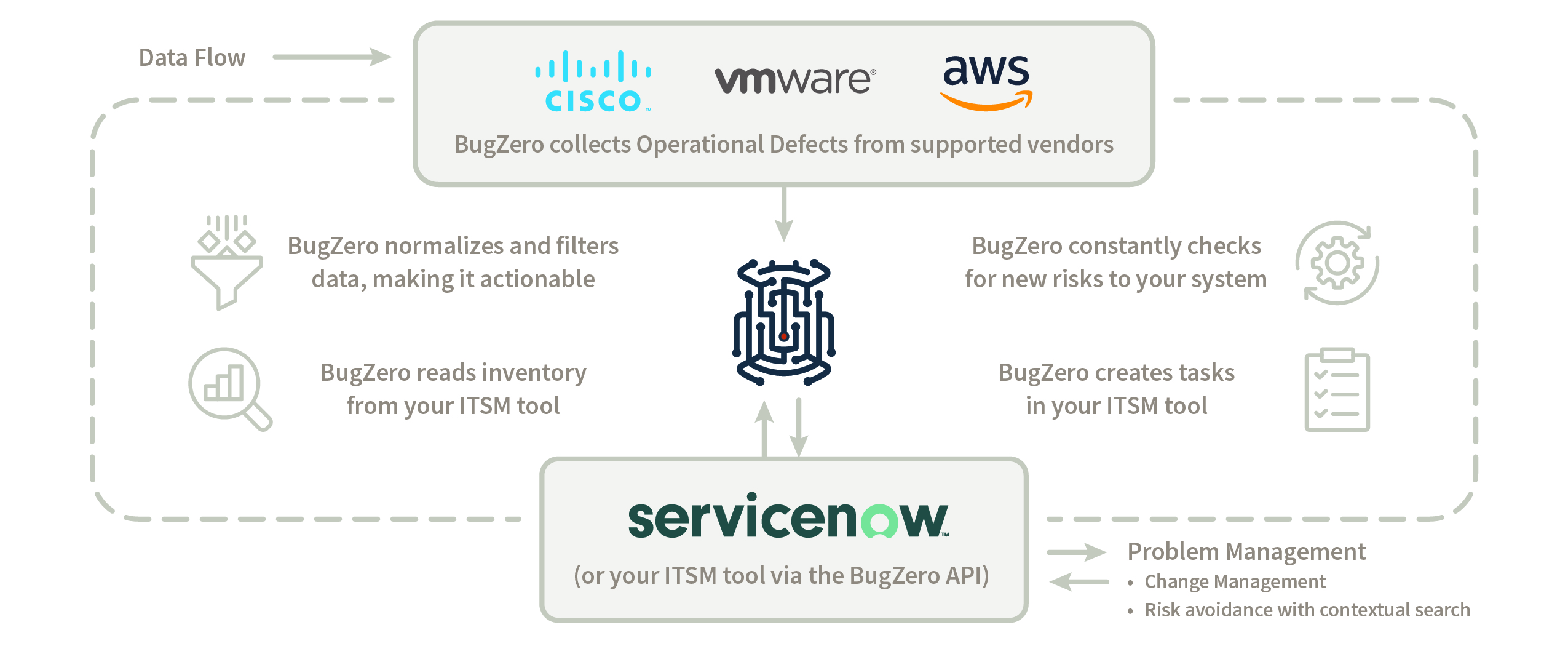

For decades, we’ve had monitoring tools tell us when a hard drive fails or a service in an OS stops working. Why don’t we have a monitoring tool that tells us when the software running the storage or database is broken? The forums have postings about such bugs, but how do you even manage forums?
